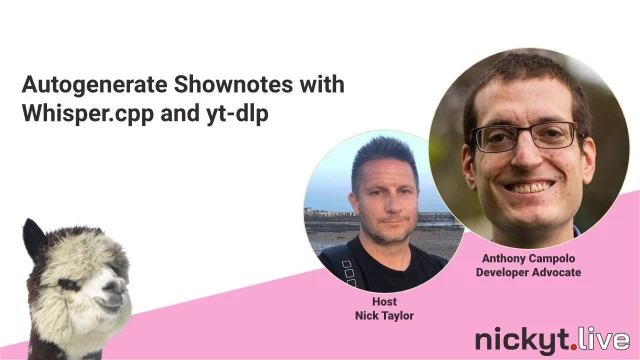

Autogenerate Shownotes with Whisper-cpp and yt-dlp

Anthony Campolo discusses his open-source project AutoShow, which automates the creation of show notes and summaries for video and audio content using AI tools.

Episode Description

Anthony Campolo demos AutoShow, an open-source tool that generates AI-powered show notes, summaries, and chapters from YouTube videos and podcasts.

Episode Summary

Anthony Campolo joins Nick Taylor to showcase AutoShow, an open-source project he built to solve a personal pain point: generating polished show notes, summaries, and timestamped chapters for audio and video content. The tool chains together several technologies — yt-dlp for downloading YouTube content, Whisper.cpp for local transcription, and LLM APIs like Claude and ChatGPT for generating structured summaries. Anthony walks through the evolution of the project, from manually copy-pasting transcripts into ChatGPT to a fully automated pipeline that takes a YouTube link and produces SEO-optimized metadata in under a minute. During the live coding session, they run the tool on one of Nick's videos using both local Whisper transcription and cloud services like Deepgram and AssemblyAI, comparing the outputs. The conversation branches into practical topics like cost tradeoffs between local and paid transcription, plans for productizing the tool as a pay-as-you-go web service, and potential workflow automations using GitHub CLI for content creators. They also touch on the broader theme of AI engineering accessibility, arguing that building useful AI-powered tools today is mostly about scripting and API integration rather than deep academic knowledge, making it an exciting time for developers to experiment.

Speakers

- Nick Taylor

- Anthony Campolo

Chapters

00:00:00 - Introduction and AutoShow Overview

Nick Taylor welcomes Anthony Campolo to the stream and introduces the topic for the session. Anthony explains the motivation behind AutoShow, a tool he built to address the tedious process of generating show notes, summaries, and chapter breakdowns for the large volume of content he produces across podcasts, streams, and videos.

Anthony describes how he realized that feeding a full timestamped transcript to an LLM with a carefully crafted prompt could automate the creation of episode descriptions, summaries, and discrete chapter segments. He outlines the core technology stack — Whisper.cpp for transcription, yt-dlp for YouTube integration, and LLM APIs for generating the final output — and traces how the project evolved from a series of manual steps into a single-command automated pipeline.

00:05:11 - Demo Prep, Cost Discussion, and Previous AI Work

Nick acknowledges the chat and they begin discussing practical concerns like the cost of running AutoShow with various API keys and transcription services. Anthony explains the spectrum of options, from completely free local processing with Whisper.cpp to paid cloud transcription and premium LLM models that might cost a few dollars per episode, with cheaper alternatives available at reduced quality.

Anthony also demonstrates a previous use case where he ran AutoShow on Nick's coworking streams and fed the summaries into a LlamaIndex chatbot. The chatbot was able to accurately summarize Nick's recent work and outstanding tasks, showcasing how the tool can serve not just content creation but also work logging and meeting summarization — a flexibility enabled by attaching an LLM to large chunks of transcribed text.

00:13:17 - Open Source Vision and Product Plans

Anthony shares his vision for keeping AutoShow open source while building a paid product on top of it. The open-source repository will remain the base logic layer, allowing anyone to run the tool locally for free, while a web-based frontend will let non-technical users input a YouTube link, select their preferred services, and receive generated show notes for a fee.

He discusses the challenges of pricing and monetization, noting he has never built a SaaS product before. The conversation covers potential approaches like pay-as-you-go versus subscription models, the complexities of calculating margins across different transcription and LLM service costs, and his wife's encouragement to move toward a subscription service. Nick suggests pay-as-you-go as simpler to implement and less risky.

00:18:25 - Live Coding: Setting Up Whisper.cpp and Running Locally

Nick and Anthony begin the hands-on portion of the stream, opening the AutoShow repository in VS Code and walking through the setup process. They install dependencies, clone Whisper.cpp inside the project, and build the base transcription model. Anthony explains the project's dependency structure, including SDKs for OpenAI, Anthropic Claude, Deepgram, and AssemblyAI, plus Commander.js for the CLI interface.

They run the tool on one of Nick's short YouTube videos, encountering an error when the default large model flag doesn't match the compiled base model. After fixing the flag, the tool successfully downloads the video, extracts audio, runs it through Whisper transcription, and generates a markdown file with the prompt and transcript. They then manually paste the output into ChatGPT to demonstrate the original workflow before automation.

00:26:16 - Reviewing Output and Automated Pipeline with Cloud Services

They examine the generated markdown output, which includes a one-sentence description, a paragraph summary, and timestamped chapters. Anthony explains how the prompt can be customized for different chapter lengths and additional outputs like key takeaways. They then move to the automated pipeline, running the tool with Deepgram and AssemblyAI transcription services feeding directly into the Claude API, eliminating the manual copy-paste step entirely.

Nick reviews the output and notes its accuracy despite minor spelling issues with proper nouns. They discuss how transcription services like Deepgram and AssemblyAI offer configurability for removing filler words, custom word banks, and punctuation handling. Anthony compares the two services and mentions he personally prefers Deepgram but acknowledges AssemblyAI's momentum and funding advantage in the market.

00:38:40 - Code Walkthrough and Architecture Discussion

Anthony guides Nick through the project's code structure, starting with the main CLI entry point built with Commander.js and moving into the core process-video logic. They examine how the tool uses yt-dlp to extract YouTube metadata, how Whisper.cpp is called locally for transcription, and how the cloud transcription services and LLM APIs are integrated as alternative pathways.

Chat participants suggest improvements like using Google's ZX or Execa instead of raw execSync calls. Anthony acknowledges these suggestions and discusses other planned improvements including interactive CLI prompts using Inquirer, better error handling, and RSS feed support via a fast XML parser. Nick mentions the Effect TypeScript library as another potential improvement for structured error handling.

00:53:51 - Content Creator Workflows and Automation Ideas

The conversation shifts to broader content creator workflows. Nick describes his existing automation setup where he uses the GitHub CLI to create pull requests from scheduled content syncs, auto-merging deploy previews for his blog. He suggests a similar workflow for AutoShow where generated show notes could be submitted as PRs for review before publishing.

They discuss the value of repurposing content, the time burden of editing for solo creators, and why live streaming is attractive compared to polished YouTube production. Anthony shares that he used to spend ten hours editing podcast episodes, reinforcing the need for automation tools. Nick mentions his experience with Descript for audio editing and how transcription services can handle filler word removal.

01:05:05 - Future Plans, AI Engineering, and Closing Thoughts

Nick and Anthony discuss upcoming improvements to the CLI, including interactive prompts and a potential TypeScript migration that Nick volunteers to lead. They plan a future stream to tackle the conversion incrementally. Anthony reflects on how AutoShow is the first project he has built entirely from scratch and open sourced, contrasting it with his previous pattern of contributing to other people's frameworks.

The conversation closes with a discussion about AI engineering accessibility. Anthony argues that building AI-powered tools today is primarily about Node scripting and API integration rather than deep academic knowledge, making it approachable for web developers. They agree that despite cynicism in the industry, it is an exciting and empowering time to build software, and encourage viewers to experiment with the tools available. Nick previews upcoming streams and they sign off.

Transcript

00:00:23 - Nick Taylor

Hey everybody, welcome back to Nicky T Live. I'm your host Nick Taylor, and today I'm hanging out with my man Anthony Campolo. Anthony, how you doing?

00:00:32 - Anthony Campolo

Yo, yo, yo. Super stoked to be back and happy to chat about stuff I'm working on, some AI things and have some conversations about that.

00:00:43 - Nick Taylor

Well, cool. Awesome. I'm just gonna drop some links for places where people can follow you if they want to. So I guess, I'll bring us over to PairingView right away and we can just kind of jump into what we're gonna talk about today and we're gonna do some live coding too. So, so you created this repository called AutoShow, and I guess, why don't you break it down, what it, what it's for, and then maybe some of the tech under there. Like, we've got some, different models, for the LLMs and stuff. So I think it'd be good to kind of talk through all that, before we even jump into things.

00:01:21 - Anthony Campolo

Yeah, so this is something where I was solving an issue that I had myself where I create tons of content. Like I do written content, audio content, video content, you know, like I go on podcasts, I do streams and just like all this different stuff that I do and like both guest appearances and my own things. And you're in kind of a similar boat. You've done almost all the same content mediums that I've done. And yeah, you know, you can think about them in different ways. Like some people, if they're just doing a stream, like when you're doing a coworking stream, you'll have like a one-sentence description in your YouTube description and like a generic title. And then you'll just go and you'll film like an hour and a half of content. And then like, if someone wants to watch it, they can watch it. But that's it. That's just the whole thing. So the problem I wanted to solve is I wanted to be able to just take a huge chunk of content and do a couple of things. I wanted to create like a good summary and a good, like, meta description. And then specifically chapters, because if you watch really legit podcasts, usually what they do is they'll break down the show into like discrete 5 or 10 minute sections that kind of hone in on a specific topic, you know? Yeah. So I realized that you could use AI to do this if you had a whole transcript with timestamps. Once the context window got big enough for long enough conversations, you could feed it to ChatGPT or to Claude and basically say, hey, here's a transcript and here's what I want. I want this summary. I want these chapters. I want the summary to be this long. I want the chapters here to be this long. You could tweak all these things. You can even say, I want new title ideas. Like if it was a piece of content you didn't have a title for, or if you want like key takeaways or things like that.
So I started just doing this and I first started using Whisper.cpp, which is a C++ version of Whisper, which is an open source transcription model from OpenAI. And that ended up being the first kind of base layer. And what I did is I just built a whole bunch of scripts around it. And then also added in yt-dlp, which is a tool that lets you interface with YouTube. Cause I was kind of thinking, you know, even if you have a podcast, usually your podcast will also be on YouTube. So like YouTube is like a kind of uber source of just content for so many people. And so I built out this scripting workflow where you would download, you would take a YouTube, just start with a YouTube link. And what you would do is you would download the video, convert it to audio, run the audio through Whisper transcription, and then take the transcription and stick a prompt on top that would say what you want the show notes to be. And then I'll feed that whole thing to an LLM, first ChatGPT and now I use Claude, and then I would copy paste back the response I got on top of the prompt. So then you would have the show notes and then the transcription altogether. So I was just basically, I was doing each of these steps kind of manually and then eventually built up workflows where you just give it a single command and it would get you all the way up to the point of having the prompt and the transcript. Now, just yesterday though, and I did this just for you, it's just for this stream, it's been this thing that I've been getting all the pieces together to where what you really want is you wanna be able to feed in a transcription service and okay, an LLM API so that literally you just give it, there's no manual steps whatsoever, is actually fully automated. So you can just start from a YouTube link and then get everything generated for you right on the spot. 
And then all of a sudden you have this show notes and it's like literally within a minute you have this like SEO optimized thing for your audio or video content. So that was a very long description, but hopefully that all made sense.
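
The "stick a prompt on top of the transcript" step Anthony describes can be sketched roughly as follows. This is a minimal illustration, not code from the AutoShow repository: the `TranscriptSegment` type, the function names, and the prompt wording are all assumptions made for the example.

```typescript
// Hypothetical types and names for illustration; not taken from the AutoShow source.
interface TranscriptSegment {
  startSeconds: number; // offset from the start of the recording
  text: string;
}

// Format a second offset as HH:MM:SS, the timestamp style used for chapters.
function formatTimestamp(totalSeconds: number): string {
  const s = Math.max(0, Math.floor(totalSeconds));
  const pad = (n: number): string => (n < 10 ? "0" : "") + n;
  return pad(Math.floor(s / 3600)) + ":" + pad(Math.floor((s % 3600) / 60)) + ":" + pad(s % 60);
}

// Put the instructions on top and the timestamped transcript underneath,
// so the whole document can be pasted (or sent) to an LLM in one go.
function buildShowNotesPrompt(title: string, segments: TranscriptSegment[]): string {
  const instructions = [
    "This is a transcript with timestamps.",
    "Write a one-sentence description, a one-paragraph summary,",
    "and chapters with a timestamp and title for each topic shift.",
  ].join("\n");
  const transcript = segments
    .map((seg) => "[" + formatTimestamp(seg.startSeconds) + "] " + seg.text.trim())
    .join("\n");
  return "# " + title + "\n\n" + instructions + "\n\n## Transcript\n\n" + transcript + "\n";
}
```

Keeping the prompt and transcript in one document mirrors the manual workflow from the episode: the same text can be pasted into a chat window or sent through an API without changes.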

00:05:12 - Nick Taylor

No, it's all good.

00:05:12 - Anthony Campolo

It's all good.

00:05:13 - Nick Taylor

Also, thanks, Nate Codes for joining us today. Met Nate at Render ATL last year. He's, he's on mobile at the moment, but he's, he was curious about the project, so.

00:05:24 - Anthony Campolo

He's bookmarked. Oh man, thank you, Nate. Happy to see you here.

00:05:26 - Nick Taylor

It's been a while. Yeah. So no, this sounds pretty cool. And this is like, I think the way a lot of projects start, you know, it's like, this thing's annoying me or I need, you know, I've done these things, but it's become tedious. So like, you know, you kind of scratch your own itch and boom. And, and so you've open sourced it for now and.

00:05:49 - Anthony Campolo

Yeah.

00:05:50 - Nick Taylor

I guess I'm curious, like we're definitely gonna do some live coding and see this in action. I'm, I'm curious, like what's, let's say like for example, like this stream is typically about an hour and a half when I have a guest. What, what would be like, say the cost of that if I'm using my own like API keys and like, you know, there, there's a few services in here, like, so the transcription, there's different models. So like, we've got an OpenAI API key and stuff, like just, I guess, just to kind of gauge like what's a potential cost of this.

00:06:22 - Anthony Campolo

I don't know if you have a number. Yeah, it's a really good question and it's an extremely hard question to answer. So let me explain the way it's set up right now, because I started it where you could do everything locally, so originally there was no cost. Well, technically there's a cost, because I have a paid subscription to Claude and ChatGPT. So I would use just my monthly subscription and kind of like my own limit to just feed it these show notes. But there's multiple trade-offs. There's trade-offs along the transcription route because you can do the transcription totally for free on your own machine if you're okay figuring out how to download Whisper.cpp and build a base model and work with this whole C++ toolchain that's not very portable. If you want to use a transcription service, you then have two different trade-offs, which is there's varying models within the transcription services themselves, where there's more expensive ones that are better and cheaper ones that are crappier. So you can kind of try all the crappiest stuff. And this is where I haven't really done this yet, where I'm going to have to basically run like a 20-matrix benchmark where you're going to use different transcription services, different models the transcription services offer, and then different LLMs, because then the LLMs also have the same trade-off where there's cheaper LLMs that can take more text for less money and go faster, but they'll give you worse outputs than the more expensive ones. So I had been using the best transcription I could get locally, which is basically as good as many of the paid services will give you at this point. Like the open source Whisper model is really, really fricking good. So if you can just run that on your machine, that's honestly the best thing to do.
And then I always use the very best model I could get my hands on, which for a while has been ChatGPT. And then there's a case to be made for why Claude 3 Opus, I think is probably the best one to use right now. But those, if you're using them through an API key, those can get pretty expensive. So you may end up spending like a couple dollars per episode. So not that crazy. But if you go the cheap route, you could get like, you could do the transcription and the LLM part for like 5 to 10 cents for like an hour long episode. Like you can do, you can do it very, very cheap. It's just kind of a question of like, how good does this output need to be? And also, are you like publishing this output or are you going to run this on 100 episodes and then stick that all in a vector database so you can like query it. That's also an option. Then you can kind of go with a slightly degraded version is you just want the raw, like, summaries in there, even if they're written kind of awkwardly.
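
To make the trade-off concrete, here is a rough back-of-the-envelope estimator. Everything numeric in it is an assumption for illustration: the speaking-rate and tokens-per-word heuristics are ballpark figures, and the `Rates` fields are placeholders to be filled in from each provider's current pricing, not quotes from the stream.

```typescript
// All rates are hypothetical placeholders; check each provider's pricing page.
interface Rates {
  transcriptionPerHourUsd: number; // 0 when transcribing locally with Whisper.cpp
  llmInputPerMillionTokensUsd: number;
  llmOutputPerMillionTokensUsd: number;
}

// Rough heuristic (an assumption): about 150 spoken words per minute
// and about 1.3 tokens per English word.
function estimateTranscriptTokens(durationMinutes: number): number {
  return Math.round(durationMinutes * 150 * 1.3);
}

// Sum the transcription cost and the LLM input/output token costs for one episode.
function estimateEpisodeCostUsd(durationMinutes: number, outputTokens: number, rates: Rates): number {
  const transcription = (durationMinutes / 60) * rates.transcriptionPerHourUsd;
  const input = (estimateTranscriptTokens(durationMinutes) / 1e6) * rates.llmInputPerMillionTokensUsd;
  const output = (outputTokens / 1e6) * rates.llmOutputPerMillionTokensUsd;
  return transcription + input + output;
}
```

Running the same function over every combination of transcription rate and LLM rate gives the kind of comparison matrix Anthony mentions wanting to benchmark, at least on the cost axis; output quality still has to be judged by reading the results.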

00:09:08 - Nick Taylor

Yeah. And I guess that the putting in the vector database would be super helpful if you wanted to develop some kind of search over time, or, you know, like, not, not necessarily embedding like a, a chat-like experience in your, in your website, but, but you could have something like that.

00:09:26 - Anthony Campolo

Like, you know, that's what I showed you last time. So, so our last episode when we did the AI frontend, that was, I kind of did this in reverse where I was showing you once I had already used that tool to generate some summaries for your episodes. We had done that. Oh, and actually I wanted to share this with you.

00:09:41 - Nick Taylor

I got it here, I think.

00:09:41 - Anthony Campolo

Discord.

00:09:43 - Nick Taylor

Yeah.

00:09:43 - Anthony Campolo

Beforehand. So, cool. I had run them on your episodes where it was some of your guests, but you were like, oh, we should do this on your coworking ones. So I just sent you two screenshots in the Discord. So what I did is I ran AutoShow on your last 10 coworking streams, basically over the last month, I think, over the course of May, and then gave those summaries to the LlamaIndex chatbot that we created. And then I asked it basically two things: what recent work has he been doing over this month, and what still needs to be done. So you can read this and let me know if this actually makes sense for what you've been working on.

00:10:29 - Nick Taylor

Yeah.

00:10:29 - Anthony Campolo

I'd be curious.

00:10:30 - Nick Taylor

Yeah. So I, I, I shared one on the screen there so people, watching the stream can catch it. So, Star Search feature enhancement. So that's a new feature we built out at Open Sauced using, large language models and, and GitHub data and. Yeah, I was debugging a carousel component. Yeah, that's right. Okay. Yeah, I remember this episode and then, yeah. Then there's the issues table. Yeah, no, that, that definitely checks out. And let me just copy the other one just so we can go take a peek. Yeah. Finalize the implementation. I ended up getting busy with other stuff, so my coworker Zeyu ended up doing it, but, But it wasn't any tasks.

00:11:12 - Anthony Campolo

That's correct.

00:11:13 - Nick Taylor

Yeah. Cool. Yeah. Did some, yeah. Did some screen testing sizes and code cleanup. Yeah. No, this is, this is, I would say pretty accurate. So that's pretty cool.

00:11:26 - Anthony Campolo

I also dropped in the, and so this is a, and this is a whole different use case from what I was doing. I've been wanting to create, you know, summaries of like content, but for you, this is like a log of the work you've done and the work that still needs to be done. This is like you're, you're like having a meeting summarized for you, which is like an entirely separate use case. That it can just kind of do because of the flexibility of having an LLM attached to this huge chunk of text.

00:11:51 - Nick Taylor

Yeah, no, totally. I'm just dropping the— our previous, stream, just dropping it on YouTube and on, just let me just drop it in chat here as well.

00:12:02 - Anthony Campolo

Cool.

00:12:03 - Nick Taylor

Yeah, no, so yeah, if, if folks check that last one out too because it ties into this, like Anthony was saying. And what's going on over here?

00:12:13 - Anthony Campolo

Okay, cool.

00:12:14 - Nick Taylor

All right. Sorry, just checking out chat and stuff. It's a little, I, I'm fine multitasking with the chat. The thing is you can't, like I'm using Restream, but even like StreamYard, you can't post messages to like Twitter yet, or X, like when you have a livestream, you have to go over there.

00:12:32 - Anthony Campolo

Oh, X. Yeah. But what's funny is their messages now come in on StreamYard. I just realized this cause I was doing a stream yesterday for the first time. Someone commented on Twitter and it came in through StreamYard. And so I went into Twitter and responded in the chat through my own account.

00:12:50 - Nick Taylor

Yeah, that's what I did. But like, yeah, same for me. I'm using Restream, but I will see like LinkedIn messages or like Twitter or X. It's just you can't respond. And I'm guessing there's no API for that yet, maybe, that's exposed publicly. Maybe that's, I don't know, something where StreamYard pipes in LinkedIn messages.

00:13:10 - Anthony Campolo

I'm not sure. No one's watching. Actually, that's not true. My sister watched me on LinkedIn once.

00:13:17 - Nick Taylor

Cool. Cool. Okay.

00:13:18 - Anthony Campolo

So, and so this is like also just to real, real quick, I wanna talk about the open source.

00:13:23 - Nick Taylor

Oh yeah.

00:13:23 - Anthony Campolo

Yeah. This and kind of my vision of where it could go. Cuz it was really important for me that I wanted to build this tool out in an open source way. But honestly, like, I ended up having multiple people in my life at various points in time as I was explaining it and like showing it to them and like using it. People were like, why aren't you charging for this? Like, why aren't you making this a product? Because I found a lot of people who found a lot of use for this in like weird, different kind of unique ways. So what I'm kind of thinking right now is there's going to be this open source repo. This is always going to stay open source and this will be basically like the base logic. So if anyone wants to do this and wants to generate this stuff totally for free, even with open source models, they can, because the next step I need to do is integrate llama.cpp so you can do the LLM step locally as well. That's the one piece that's missing right now. But that'll all be there. And then I'm going to build a front end so that basically non-tech-savvy people, people who don't know how to clone down a repo and run a CLI, will be able to just input like a YouTube link on a form, click a button, pay however much, and get it back right there in a UI. So that's where this is eventually going. So I think I can kind of still keep it as an open source thing that I get to work on in public, but then have a part of it that can be monetized in some way. So I've never built a product before. We were talking about this kind of before, like SaaS and stuff like that. And it's really great. Like, first, I've never really built a legit open source project. It was like, I've done a lot of open source work. I contribute to open source frameworks and things like that. And that's something I've done for.

00:15:01 - Nick Taylor

Yeah.

00:15:01 - Anthony Campolo

Yeah. A long time. That was always me finding a cool project and kind of like glomming onto it, you know, and finding interesting people who are doing cool work. This is the first thing I've built totally myself from the ground up as my own open source thing. It's got 8 stars right now, which is 8 more than any of my other repos. So that's, that's pretty cool. And it was in, the Node Weekly newsletter. Oh, Peter, Peter Cooper puts out his, his whole slate of Cooper Press newsletters, and so he posted my, blog post. I wrote a blog post 2 months ago that does the very, very first implementation of it. You should pull it up, actually. Go to ajcwebdev.com.

00:15:39 - Nick Taylor

Okay, there we go. It's already in my history.

00:15:41 - Anthony Campolo

Just go to blog and then the second most recent one. So it's got similar titles what this, this stream was titled. So this shows you everything up to the point of Whisper.cpp and then using your own model if you just have a subscription on like ChatGPT or Claude. This does not include any of the transcription services APIs or LLM APIs that we're going to go through today. That's going to be a whole separate blog post. This is basically— this is how you do this entirely locally. And then what we're going to do today in our demo is going to be how do you do this with services that you're paying for?

00:16:19 - Nick Taylor

Okay. Yeah, I was curious too, like, like you want this to be a paid product, obviously. But still keeping it open source. Are you thinking it's gonna be like a website or are you thinking of like making a, like a small app instead? Like, like a, sorry, like a desktop app wrapped in like Tauri or like, Electron or—

00:16:41 - Anthony Campolo

The first thing would be just a website cause I've never even built a desktop app or a mobile app. So like I just would wanna start with what I already know how to do, which would be a straight up static website with, with some Jamstacky stuff that will hit a Stripe API, and that's going to be the, the whole, the whole deal. And maybe integrate a database just so people can like save their, save their summaries. But I would go, I would go real simple, just website dashboard, kind of like almost single page app type thing with like some very, very basic login and payment mechanisms. That's what I'm currently thinking, at least. I haven't built any of this stuff yet. Right now I'm just kind of—

00:17:18 - Nick Taylor

yeah.

00:17:19 - Anthony Campolo

Right now I'm integrating the APIs and the paid services and kind of figuring out like, how much do I even need to charge for this? Like if I'm exposing these different services to people, they'll need to pay for the different models. I'll need to calculate, based on how much stuff they give me, what it's gonna cost so that I can cover margins and can still make a profit. Cause I'm gonna be paying for the API costs, but I'm feeding it to these servers. So there's like all this stuff to figure out still. But this is kind of where I'm at right now. And so it already has a lot of functionality open source-wise, but building it into a product, this is what my wife has been talking to me about. She was actually like, how long would it take you to make it a subscription service? And I'm just like, 3 months. Like, you know, it's gonna take a while, you know?
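
The margin arithmetic Anthony is describing could look something like the sketch below for a pay-as-you-go model. The function name, the margin rate, and the minimum charge are all hypothetical; nothing here reflects actual AutoShow pricing.

```typescript
// Hypothetical pricing helper: turn an estimated provider cost into a customer price.
// marginRate is a fraction (0.5 means a 50% markup on cost).
function quotePriceUsd(providerCostUsd: number, marginRate: number, minimumChargeUsd: number): number {
  const withMargin = providerCostUsd * (1 + marginRate);
  // Never charge less than the minimum, and round up to whole cents.
  // The tiny epsilon keeps floating-point noise from bumping an exact amount up a cent.
  const cents = Math.ceil(Math.max(withMargin, minimumChargeUsd) * 100 - 1e-9);
  return cents / 100;
}
```

A minimum charge matters for short clips, where the provider cost can be a few cents and a payment processor's fixed fee (not modeled here) would otherwise eat the whole margin.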

00:18:01 - Nick Taylor

Yeah. No, no, I, I, I feel you. But no, that's, that's super cool. And, I, I, I think this, I, you know, I think it's gonna be useful. Like, I mean, you've already shown me some of this before. Like I haven't like actually dug into the code yet cuz I, I only cloned the repo today. But, mm-hmm. I, I could definitely see this being super useful as a content creator. So, yeah, cool. So, so what do you want to do now? Do you want to just kind of— I've cloned the repo, I've set up, the environment variables for the API keys.

00:18:33 - Anthony Campolo

Let's, yeah, let's open it up in, in VS Code. And then what I'm going to have you do first, this shouldn't take too long, is I'm going to have you clone down Whisper.cpp and just build the base model, which is kind of crappy but should only take about a minute or so for you to build. All these instructions will be in the README.

00:18:52 - Nick Taylor

Okay.

00:18:53 - Anthony Campolo

Yeah. Okay.

00:18:53 - Nick Taylor

Let me open the README then.

00:18:56 - Anthony Campolo

README.

00:18:59 - Nick Taylor

Good old preview. Cool.

00:19:02 - Anthony Campolo

Yeah. So, let's scroll down. Yeah. So, do you already have those two installed on Brew?

00:19:08 - Nick Taylor

I'm sure you got FFmpeg.

00:19:10 - Anthony Campolo

Do you have yt-dlp?

00:19:12 - Nick Taylor

I'm pretty sure I do. But let me just run it just in case. While that's going on, we can chat a bit. The—

00:19:21 - Anthony Campolo

And then npm i, that's installing. Actually, go to the package.json so people can see what some of the dependencies are here. So it includes SDKs for two LLMs, OpenAI and Anthropic's Claude, and then two transcription services, which are Deepgram and AssemblyAI. Okay. And then it's got node-llama-cpp in there. That doesn't actually do anything yet. I haven't written that code. But eventually it's going to be able to reach out to a local LLM. And then, Commander.js. You know Commander.js?

00:19:59 - Nick Taylor

Yep. Yeah, that's for, just building, CLIs, right?

00:20:05 - Anthony Campolo

Yeah. Yeah. Yeah. So you technically ran two commands at once. You ran the brew command and then you had npm i after it.

00:20:11 - Nick Taylor

Yeah. Okay. It looks like, okay. It looks like Brew did not— yeah. I'm just looking at the Brew error. No such file. Oh wait, you're, oh yeah.

00:20:21 - Anthony Campolo

You're not in the right place.

00:20:23 - Nick Taylor

Oh, son of a— yeah.

00:20:24 - Anthony Campolo

Sorry.

00:20:24 - Nick Taylor

Yeah. Let me go up one. Auto show. Oh yeah. That, that would make sense.

00:20:36 - Nick Taylor

Cool.

00:20:36 - Anthony Campolo

Cool.

00:20:36 - Anthony Campolo

Yeah, that'll, that'll work.

00:20:38 - Nick Taylor

Yeah.

00:20:38 - Anthony Campolo

And then, go back to the package.json real quick. There's just one other dependency I wanna explain real quick. The fast-xml-parser.

00:20:47 - Nick Taylor

That, okay.

00:20:48 - Anthony Campolo

Is something I also just built in here recently, where now you can feed it a podcast RSS feed. Cause it's previously just been working totally with YouTube links. But now if you have a podcast RSS feed, or just an RSS feed at all with audio, it will now run this whole process on that. So I first created this for FS Jam, actually, and then I went through all these different steps to like build something with YouTube eventually. But now I can just run it and I'm gonna be able to run this on all 95 previous FS Jam episodes.
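
For readers unfamiliar with podcast feeds: each episode is an `<item>` element whose audio file is referenced by an `<enclosure url="...">` element. The sketch below shows which data gets pulled out of a feed. It deliberately swaps in a simple regex scan so the example has no dependencies; it is not how the project's XML parser works, and a real feed should go through a proper parser. The `FeedEpisode` type and `extractEpisodes` name are made up for the example.

```typescript
// Illustration only: a regex scan, not a real XML parser.
interface FeedEpisode {
  title: string;
  audioUrl: string;
}

// Collect the title and audio URL of every <item> that has an <enclosure>.
function extractEpisodes(rssXml: string): FeedEpisode[] {
  const episodes: FeedEpisode[] = [];
  const itemPattern = /<item\b[^>]*>([\s\S]*?)<\/item>/g;
  let match: RegExpExecArray | null;
  while ((match = itemPattern.exec(rssXml)) !== null) {
    const body = match[1];
    // Titles are sometimes wrapped in CDATA; accept both forms.
    const title = /<title>(?:<!\[CDATA\[)?([\s\S]*?)(?:\]\]>)?<\/title>/.exec(body);
    const enclosure = /<enclosure\b[^>]*\burl="([^"]+)"/.exec(body);
    if (title && enclosure) {
      episodes.push({ title: title[1].trim(), audioUrl: enclosure[1] });
    }
  }
  return episodes;
}
```

Each returned `audioUrl` can then be handed to the same download, transcribe, and summarize steps used for a YouTube link.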

00:21:24 - Nick Taylor

All right, let me clone Whisper here. So I'm just gonna—

00:21:27 - Anthony Campolo

so you, you want to clone it inside of AutoShow.

00:21:30 - Nick Taylor

Oh, okay, okay. What's the reason for that, just out of curiosity?

00:21:33 - Anthony Campolo

It's just because it's a Node script that's calling out to multiple things on your machine, one of which is going to be yt-dlp and one of which is going to be this Whisper thing. So this just allows the path and the main command and the stuff to all kind of be in the right place. This is also why working with Whisper.cpp is the most complicated, dev-y kind of way of doing this. Most people are not going to do this. They're going to use the services. Gotcha. So this entire time you just want to stay in the base directory as you're running these commands. Okay, cool.

00:22:10 - Nick Taylor

I'm just going to run them one at a time just so we can see things happening here.

00:22:16 - Anthony Campolo

Yeah, but you're already not in the right place.

00:22:18 - Nick Taylor

Oh, son of a—

00:22:19 - Anthony Campolo

You're not going into whisper.cpp, so just stay in AutoShow.

00:22:23 - Nick Taylor

You literally told me what to do and I'm like—

00:22:25 - Anthony Campolo

Yeah. Well, I, I saw you, you had already done that after I told you. So that was one of the reasons why I brought it up. So cool. Cool.

00:22:32 - Nick Taylor

All right. So let's go ahead and—

00:22:35 - Anthony Campolo

So what that did, this built the simplest, smallest model, which is the base model. And if you really want to get a good transcription, you want to run the large model, but the large model takes 7 minutes to download. And then when you run it on an episode, it'll take like 5 to 10 minutes for an hour-long episode. This is gonna let us just run this on something real. So actually, I hadn't picked a video. Real quick, I'm just gonna go on your YouTube and I'm gonna find a video you have that's like 10 minutes or so. There's SolidJS and SolidStart Explained. That's what I want. That's gonna be perfect.

00:23:08 - Nick Taylor

Okay, cool.

00:23:08 - Anthony Campolo

So I'm gonna give you this link.

00:23:12 - Nick Taylor

All right, I'll just grab that out.

00:23:14 - Anthony Campolo

Once this command is done, you can run this. And you're gonna run the very first command in the section where it gives you all of the Node commands. So, run autogen Node scripts. The very first one is gonna be node autogen.js --video. So, because this is a CLI, there's a video flag where you feed a YouTube video, a playlist flag where you feed a YouTube playlist, a URLs flag where you just give it a general list of URLs in a file, and then an RSS flag if you wanna run it on an RSS feed. Okay.

00:23:52 - Nick Taylor

So, okay. So I've got the link for the video up top there with Attila and okay. Yeah. And you were saying you can run multiple. Yeah.

00:24:00 - Anthony Campolo

Okay. Mm-hmm.

00:24:00 - Nick Taylor

So, let's just, let me stretch this up so we can get some more real estate here. Okay.

00:24:10 - Anthony Campolo

And it's gonna go through a couple steps.

00:24:12 - Nick Taylor

Okay.

00:24:13 - Anthony Campolo

And it's gonna print out each, it's gonna log each thing as it's going. So the first thing it's gonna do is it's gonna download a WAV file. So it's gonna take this YouTube video and it's going to extract the audio. And actually, sorry, first it builds a markdown file with the metadata from the YouTube video. So it takes the—

00:24:34 - Nick Taylor

oops, compiler error. Let's see what happens. I also just wanna say hey to B1 Mine in the chat there. Thanks for joining us.

00:24:43 - Anthony Campolo

Oh, I know what happened. Okay. I, I gave it to you with the default, which is to use the large model. So, okay, bump, bump up the command again and give it a flag for -m and then base. Yeah. So this is— so I've— so this is a flag that lets you configure the size of the Whisper model you're using.

00:25:05 - Nick Taylor

Okay. So let's run this again.

00:25:07 - Anthony Campolo

This should work this time.

00:25:10 - Nick Taylor

Cool.

00:25:11 - Anthony Campolo

And to be clear, base, medium, and large are the three things you can pass to the -m flag. And the command you first ran where you built the base model, you need to make sure you build the right one for that. So now we got the WAV file this time.

00:25:26 - Nick Taylor

Oh, okay. I, I, I understand. Okay. I see what you mean. We didn't compile like the large model, so like it's not, it's not gonna run. Okay.

00:25:34 - Anthony Campolo

Got it. Yeah. So it didn't know what to do cuz it looked for the large model. The large model is 3 gigabytes, so that's why it would take a while to download. The base model's like 100 megabytes or something. So it looks into Whisper.cpp, and this is why Whisper.cpp needs to be inside of this repo, because it's basically calling out to a model that's within the Whisper.cpp repo. So that looks like everything worked. It says process completed successfully for URL prompt concatenated to transform successfully. So the content forward slash 2024-05-05, that's the date followed by the ID of the video because every YouTube video has a unique video ID. Okay. So you should be able to now go to the content directory and find this.
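
The naming scheme Anthony describes (a date followed by the YouTube video ID, under `content/`) could be sketched roughly like this. This is only an illustration, not AutoShow's actual code: the function names, the hyphen separator, and which date is used are all assumptions.

```javascript
// Hypothetical helper: pull the video ID out of a YouTube URL.
function videoIdFromUrl(url) {
  const parsed = new URL(url);
  // Standard watch URLs carry the ID in the "v" query parameter.
  const fromQuery = parsed.searchParams.get("v");
  if (fromQuery) return fromQuery;
  // Short youtu.be links carry the ID as the first path segment.
  if (parsed.hostname === "youtu.be") {
    const id = parsed.pathname.split("/")[1];
    if (id) return id;
  }
  throw new Error(`Could not find a video ID in ${url}`);
}

// Hypothetical helper: build "content/<date>-<videoId>" (separator assumed).
function outputBasePath(url, date, dir = "content") {
  return `${dir}/${date}-${videoIdFromUrl(url)}`;
}
```

Because every video ID is unique, two different videos processed on the same day would still land in different files under this scheme.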

00:26:17 - Nick Taylor

Okay, so let's open this up here. Okay, so here we go. So this generated the markdown. So we've got, this is, this is some info about it.

00:26:28 - Anthony Campolo

And then we've got the transcript with timestamps, and so this is the whole prompt. So you should read out the prompt and what it's actually doing. So it's creating a one-sentence summary, a one-paragraph summary, and then the chapters. So this doesn't give you suggested titles and it doesn't give you key takeaways. There's eventually going to be a prompt flag that's going to let you decide what you want to be included in the prompt. But right now it just kind of gives you this. And if you want to tweak it, you can go in there and just change stuff. Like for the chapters, I said chapters shouldn't be shorter than 1 or 2 minutes or longer than 5 or 6 minutes, and it doesn't always follow that exactly. But if you wanted to have the chapters be 15 to 20 minutes, you could do that, you know?
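
To make the tweakable chapter length concrete, here is a minimal sketch of a prompt builder with the chapter bounds as parameters. The prompt wording, the function names, and the file layout (front matter, then prompt, then transcript) are assumptions for illustration; the real prompt text in the repo is not reproduced here.

```javascript
// Hypothetical prompt builder; the wording below is invented for illustration.
function buildPrompt({ minChapterMinutes = 1, maxChapterMinutes = 6 } = {}) {
  return [
    "This is a transcript with timestamps.",
    "Write a one-sentence summary of the transcript.",
    "Write a one-paragraph summary of the transcript.",
    `Write chapters with timestamps. Chapters should be no shorter than ` +
      `${minChapterMinutes} minutes and no longer than ${maxChapterMinutes} minutes.`,
    "Format the output as markdown with headers.",
  ].join("\n");
}

// Assemble the file that gets pasted into (or sent to) the LLM.
function buildPromptFile(frontMatter, prompt, transcript) {
  return [frontMatter, prompt, transcript].join("\n\n");
}
```

Calling `buildPrompt({ minChapterMinutes: 15, maxChapterMinutes: 20 })` would produce the longer-chapter variant Anthony mentions.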

00:27:14 - Nick Taylor

Okay. Yeah.

00:27:15 - Anthony Campolo

And then you give it the actual output and I have it create a markdown file with headers.

00:27:20 - Nick Taylor

Okay. Yeah, no, that's cool. Yeah.

00:27:23 - Anthony Campolo

Yeah.

00:27:23 - Nick Taylor

I was reading about this the other day. I'm taking the learning, I think it's learningprompt.org, and they're talking about how this is like a one-shot. So you're putting an example in, just to make it very clear how you want the output.

00:27:37 - Anthony Campolo

In-context learning is the fancy term for it. Let's do this. I'm sure you have a ChatGPT subscription.

00:27:45 - Nick Taylor

Yeah.

00:27:46 - Anthony Campolo

So this is how I used to do things. I'm going to show you what I've been doing for months and months and months, and then we'll show the cool part, how we can automate this. So just copy paste that entire file, dump it in ChatGPT and hit enter. Don't modify it at all. Just copy paste literally the whole thing and give it to ChatGPT 4.0.

00:28:02 - Nick Taylor

Okay, so let's do this. Let me load it up. Okay, cool.

00:28:09 - Anthony Campolo

Literally copy this whole file and the entire file, every single part of it, every word. Yep.

00:28:14 - Nick Taylor

Yeah. All right, let's bump this up a bit and let's paste it in.

00:28:20 - Anthony Campolo

Boom. And what's cool is it could— this will work up to like a 2-hour long episode. ChatGPT used to crap out after like, you know, very small amount of time. You gotta make that a lot bigger for people to read it. But yeah, you should actually copy paste that into like your, okay, so yes, anyhow, let's just hit copy code.

00:28:40 - Nick Taylor

Yeah.

00:28:41 - Anthony Campolo

And then go back to the markdown file you had and copy paste that over the prompt. So leave the transcript, but copy it over the prompt, but not, and then leave the, the front matter as well.

00:28:54 - Nick Taylor

Okay. So the prompt is, which part of the prompt or this, this whole thing here? Like the run.

00:29:00 - Anthony Campolo

The, even though it says this is the transcript, the entire, every part of the prompt. Yeah. Mm-hmm.

00:29:04 - Nick Taylor

Okay. Including the example too, right?

00:29:06 - Anthony Campolo

Okay. Yep. Including transcript attached, all of it.

00:29:10 - Nick Taylor

Well, all right.

00:29:11 - Anthony Campolo

Yep. And so now, get rid of "transcript attached" also. Oh yeah. So there's that. Yeah. So then you see how the output fits on top. So now this itself could be a webpage. So look at this in your preview mode so we can see it with the markdown. Yeah.

00:29:31 - Nick Taylor

Okay. So we got summary, chapters.

00:29:33 - Anthony Campolo

Okay. The episode.

00:29:35 - Nick Taylor

Okay. No, that's pretty cool, man. And obviously this is formatted, but it's, it's just a preview.

00:29:43 - Anthony Campolo

So like obviously, yeah, you can fix that if you add 2 spaces at the end of each line or, like, double space it. That's one thing that always bugs me that somebody needs to fix in the scripting workflow.

00:29:56 - Nick Taylor

Okay. But yeah, and, and then yeah, you can surface this with like, whether you like pop it in an Astro site or just like use Remark or, or whatever you, whatever you want in the front end there. But, no, this is super cool.

00:30:09 - Anthony Campolo

Yeah, I have, I have actually, I've, I did this with Ben Holmes when I showed him this tool. I built out actually an Astro website with a content collection that matches the front matter, because then you can just dump it directly. And I think that repo is also public. It's Astro AutoGen, I think is what it's called. Okay. This is before I started calling it AutoShow.

00:30:32 - Nick Taylor

Okay. So, but I think it's— this is pretty cool. And it's like pretty accurate. Like there's always like the classic, there's some spelling mistakes. Like it's Crab Nebula, but this is like a company name. So—

00:30:45 - Anthony Campolo

It struggles with nickyt.online. Sometimes it'll say Nikki, like N-I-K-K-I-E or N-I-K-K-I. So those are some things. There's ways to mitigate that. Whisper itself actually includes, and remember we used the base model, so this is with the worst transcription model that we could be using right now, actually. Yeah. So the fact that it has anything at all that's readable is actually incredible, because I would run the large model and this would have taken 5 times as long. It uses Delve. Yeah.

00:31:18 - Nick Taylor

Sorry, I had to call that out.

00:31:21 - Anthony Campolo

It's funny, but you could put in the prompt, don't use the word Delve, if you wanted to. Yeah.

00:31:28 - Nick Taylor

Yeah. I'm just thinking about this, like, obviously this is useful for a content creator for sure. Like you even want to productize it. But I'm already thinking of use cases. Like imagine I have a website, which most people that are doing content creation probably will. I could picture a workflow where, you know, using the GitHub CLI, I create a pull request from like, yeah, new episode generated, boom. You know, you get a deploy preview with a PR, and then you can check it out, and then you can definitely do some cleanup if you need to. Like maybe you don't like some of the formatting, or you get the spelling mistakes like the crab Nabila here, for example. But I could totally see that as a workflow, cuz right now I have a workflow, not on nickyt.live, that's just pulling in YouTube content and I have my schedule from Airtable. That's how that works. But for my blog, I use Dev.to as like a headless CMS, and there's no webhooks on dev.to for whenever I make a change. They removed them years ago and I can't remember the reason why, but basically they're probably hard to maintain. Well, yeah, I guess so. Yeah. But basically I pull like once a night, and they have an API, so I just grab all my blog posts and anything that changes, it basically updates the repo. And so my PR just shows the differences. And then as long as the deploy preview and all the checks pass, I auto merge it. So I could see maybe not necessarily auto merging, cuz you might wanna review this obviously. But I could totally see that as a workflow. You know, that's I think kind of maybe out of the scope of your future product.

00:33:20 - Anthony Campolo

No, I think that's completely in scope, and that's something that I would want. And this is the thing, the whole point of this is automation. Automate as much as possible. Because when you're a solo content creator, and especially if you're someone who's not actually making any money on it, because I did FSJam just as a labor of love and to make connections in the industry and to keep myself sharp and learn, I had all these reasons for doing it. We never made a single dollar, you know? So you really gotta be able to do everything you can to save time. And once you can start to leverage these higher level AI tools, the possibilities just completely open up. And so I love what you're suggesting right now. This is right in line with the whole mission of the project.

00:34:06 - Nick Taylor

And like, I have the code to do this already, so feel free to poach it. But basically like, like the only thing that's missing is—

00:34:12 - Anthony Campolo

I'll put it in our Discord chat so I don't lose it. Yeah. Yeah.

00:34:16 - Nick Taylor

So basically there's two parts here. I know it's not your project you're talking about now, but I can just show you kind of what I do. There's that and there's... So I have a few things, but I generate my dev.to posts. So this is basically, I probably don't need dotenv anymore with Node 20, but anyways, right. Or is it Node 22? But essentially I'm hitting the dev.to API here and then, basically, any changes that are there at the end. Oh yeah, this is just the Node script that runs. This isn't really relevant to you because your thing would just be hitting your API, but basically using the dev.to stuff.

00:34:58 - Anthony Campolo

Yeah.

00:34:58 - Nick Taylor

But there's things, cuz I've done this over and over and this is something I had to do at Netlify. I had to sync Sanity with a repo, a JSON file, because, for example, Netlify has all these partners with integrations and stuff. So like Sanity, Cloudinary and stuff. And Sanity is supposed to be the source of truth. So for example, if Cloudinary updates, I don't know, their SDK, they're gonna update it in Sanity, but that needs to be propagated to the repo because we use that repo for building things in Netlify. Well, when I worked there. So anyways, I did this whole flow of like, how can I auto merge things? Because you have to change some of the policies on the project because you have to allow for auto merging. But basically I just take a timestamp to generate all the PR information. So I create a title and then the branch name, because otherwise it's gonna be the same branch all the time. So I just put a date stamp in it so it's a unique branch. I switch to that branch and then after that I run a git add, and this is after my script is run, cuz there's a GitHub Action that does these things. So the GitHub Action runs and it says, okay, generate my dev.to posts in the current branch. And then this just does a git add of everything. And there's a check here. I forget where I found this, but basically if there is a change, then we commit it. Otherwise I just say there was nothing to update, cuz all the content got updated, but if there's literally no changes, then when you do git add, there's gonna be nothing staged. And essentially I'm just leveraging Git and the GitHub CLI here. And this is stuff I learned about a year and a half ago, but you can create PRs with the GitHub CLI.
So I'm passing the title and then just a body to say this is an automated PR, and then after that, you can actually call the GitHub CLI to merge it. This is like auto-merge here, and then delete the branch automatically once it's done and also squash it, you know? And it's a workflow I use all the time now. And, you know, in the event that one day dev.to ever disappears, I'll have to change things up. But right now I just go blog on dev.to and then once a night my site just goes and runs that GitHub Action. And so I never have to do anything for my blog unless I'm updating other parts of it that aren't the actual content. So it's a, yeah.
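
The workflow Nick walks through (timestamped branch, stage everything, commit only if something is staged, open a PR, auto-merge with squash and branch deletion) could be laid out as a command plan like the one below. This is a sketch, not Nick's actual script: the branch prefix, the title text, and the explicit push step are assumptions. The `git` and `gh` flags shown are standard ones.

```javascript
// Hypothetical plan builder: returns the commands without running them.
function buildPrPlan(now = new Date()) {
  // e.g. "2024-05-05T12-30-00"; colons are not allowed in Git branch names.
  const stamp = now.toISOString().slice(0, 19).replace(/:/g, "-");
  const branch = `content-update-${stamp}`; // assumed naming
  const title = `Automated content update ${stamp}`; // assumed wording
  return {
    branch,
    title,
    commands: [
      ["git", "switch", "-c", branch],
      ["git", "add", "--all"],
      // Exits 0 when nothing is staged and 1 when there are staged changes,
      // so a caller can stop here with "nothing to update".
      ["git", "diff", "--cached", "--quiet"],
      ["git", "commit", "-m", title],
      ["git", "push", "--set-upstream", "origin", branch], // assumed step
      ["gh", "pr", "create", "--title", title, "--body", "This is an automated PR."],
      // --auto merges once required checks pass.
      ["gh", "pr", "merge", "--auto", "--squash", "--delete-branch"],
    ],
  };
}
```

A runner would execute these in order and skip everything after the `git diff` step when it exits 0. Auto-merge also has to be enabled in the repository settings, which matches Nick's note about changing project policies.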

00:37:39 - Anthony Campolo

Anyways, man, I lived, I lived that Dev.to life for like 2 years and I was like all into Dev.to. I did all my blogging through Dev.to and then I took a brief detour into Hashnode and then there was things I didn't like about it that Dev.to had, but a couple things they had that Dev.to didn't. And then it was like I was ruined and I had to build my own blog. So there's no way I could get the features I wanted from both any other way. Yeah.

00:38:04 - Nick Taylor

Yeah. Yeah, no, I hear you. Yeah, I know. I mean, I'm, I'm biased cuz I used to work at Dev.to, but there's definitely compelling features in Hashnode. Like they, they've got an AI component to it now. I, I like that they had generated a table of contents.

00:38:18 - Anthony Campolo

I don't know why Dev.to doesn't do that still, but that was the big one. Yeah, that, that was a big, it was that and that. And then just the styling just looked nicer. It looked more modern. It looked more like a, a newer, a newer kind of, kind of blog. But, the blog I have now actually looks more like a Dev.to post. I kind of went back to like that really old school kind of markdown look, but we're, we're way off track. We should actually look at some of the code that—

00:38:40 - Nick Taylor

yeah, yeah.

00:38:41 - Anthony Campolo

It's okay.

00:38:41 - Nick Taylor

Please, to be clear, it's the— yeah, to be clear, it's not just magic. Anthony didn't just wave his hands and poof, we, we got things working. Okay. So let me close the content here and yeah, I guess, yeah, we'll look at the code and start with the root.

00:38:57 - Anthony Campolo

Let's go to autogen.sh, which needs to be renamed to AutoShow. I used to call this project AutoGen. Sorry, not .sh, .js. This started as a Bash script that turned into a Node script. The Bash script is gonna be phased out eventually. Let's not even look at that. Let's just look at the Node stuff.

00:39:17 - Nick Taylor

Okay. You could probably use the bun shell.

00:39:19 - Anthony Campolo

This is the Bash one right now. Close this file and go to autogen.js. Cool.

00:39:27 - Nick Taylor

All right, here we go.

00:39:30 - Anthony Campolo

So this is Commander. And right here, this is kind of like the closest thing to docs if you want to see everything the CLI does. It's actually very readable. So we've got a video flag, and I kind of walked through some of this already, to process a single YouTube video. A playlist flag if you have a playlist of YouTube videos. URLs if you want to just pick a bunch of YouTube videos from the ether and put them in a file and run it on that. And then RSS, which you feed an RSS feed. Then the model flag lets you select different size models. And you see at the end there it says large, so that's the default. So that's why when we first ran the command it broke, because you didn't build the large model and it tried to run that because we didn't give it a flag. So that's really important. And then we have two flags for LLMs, one for ChatGPT, one for Claude, and then two flags for the transcription services. We haven't done that step yet, but that was the next thing we're gonna show once we explain some of this code.
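
For readers who want to see the shape of that flag table, here is a rough sketch. It deliberately uses Node's built-in `util.parseArgs` instead of Commander so it runs without dependencies, so it is not the project's code. The `--video`, `--playlist`, `--urls`, `--rss` and `-m` flags and the `large` default come from the conversation; the long name `--model` and the four service flag names are guesses.

```javascript
const { parseArgs } = require("node:util");

// Hypothetical flag table modeled on the description above.
const cliOptions = {
  video: { type: "string" },
  playlist: { type: "string" },
  urls: { type: "string" },
  rss: { type: "string" },
  model: { type: "string", short: "m", default: "large" },
  chatgpt: { type: "boolean" }, // assumed flag name
  claude: { type: "boolean" }, // assumed flag name
  deepgram: { type: "boolean" }, // assumed flag name
  assembly: { type: "boolean" }, // assumed flag name
};

function parseCli(argv = process.argv.slice(2)) {
  const { values } = parseArgs({ args: argv, options: cliOptions });
  const sources = ["video", "playlist", "urls", "rss"].filter(
    (name) => values[name] !== undefined
  );
  if (sources.length !== 1) {
    throw new Error("Pass exactly one of --video, --playlist, --urls, or --rss.");
  }
  return values;
}
```

With this table, leaving off `-m` yields `model: "large"`, which is exactly the situation that broke the first run in the demo.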

00:40:29 - Nick Taylor

Yeah. Oh, hey, Fuzzy Bear, is in the chat. How you doing, Fuzzy Bear?

00:40:33 - Anthony Campolo

Fuzzy Bear has heard me talk about this a bunch. He's been watching my weekly streams I've been doing with, with Monarch as I've been building this out, actually. So he, he knows all about this project. Cool, cool.

00:40:43 - Nick Taylor

I was gonna say one thing I was thinking about, cuz when we ran into that error, I know, I know you knew what the error was right away, but I wonder if you could improve it. So like, you know, before you run, like, oh, you haven't compiled. Well, I guess maybe cuz you're gonna productize this, it might not be necessary.

00:41:00 - Anthony Campolo

There's gonna be a lot of, yeah, there's a lot of error handling that can be, could be done. There's a lot of ways to make this a lot nicer. And those are, this is why I'm sharing this with people and trying to get some, some other eyes to help QA it. Cause, yeah, like I'm, I've been building out this whole thing just with ChatGPT actually, because I'd never built like a big-ass Node scripting project. I've never even used Commander before. So, okay. I'm learning a lot as I'm, as I'm going and error handling is something that I'm always kind of coming back to and trying to improve.
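
The friendlier error Nick suggests could look something like the sketch below: check for the model file before invoking Whisper and explain what is missing. The `whisper.cpp/models/ggml-<name>.bin` layout follows whisper.cpp's usual convention but is an assumption about this setup, and the function names are hypothetical. The file check is passed in as a function so the sketch stays free of imports.

```javascript
const KNOWN_MODELS = ["base", "medium", "large"];

// Hypothetical helper: where the model file is expected to live.
function modelPath(model, whisperDir = "whisper.cpp") {
  if (!KNOWN_MODELS.includes(model)) {
    throw new Error(`Unknown model "${model}". Use one of: ${KNOWN_MODELS.join(", ")}.`);
  }
  return `${whisperDir}/models/ggml-${model}.bin`;
}

// Returns a human-readable problem, or null when the model is present.
// `fileExists` would typically be fs.existsSync from node:fs.
function checkModel(model, fileExists) {
  const path = modelPath(model);
  if (fileExists(path)) return null;
  return (
    `Model "${model}" was not found at ${path}. ` +
    `Download it first, or pass -m with a model you already have.`
  );
}
```

Run before transcription, a check like this would have turned the earlier compiler-style failure into a one-line hint to pass `-m base`.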

00:41:32 - Nick Taylor

Yeah.

00:41:33 - Anthony Campolo

So then, Sorry, I was gonna say Fuzzy Bear is making me misty.

00:41:38 - Nick Taylor

He's like, great to see you guys. I was like, I'm not crying, you're crying. Anyways, yeah, so sorry, go on. So you got all the options here.

00:41:49 - Anthony Campolo

Yeah, yeah, so that, that is just like checking to see— that's, this is just kind of logic. And, there's, there's gonna be a way to make this, cleaner, I'm sure. But, right now this is— so this is the base. And now go to the commands folder and the processVideo.js file.

00:42:06 - Nick Taylor

All right.

00:42:07 - Anthony Campolo

Yeah.

00:42:08 - Nick Taylor

Cool.

00:42:09 - Anthony Campolo

Yeah. So, this is doing the heavy lifting. This is what is processing the video. And for things like the playlist, there's also gonna be a file for processPlaylist. It basically just runs this a whole bunch of times on a bunch of videos in a playlist. So, this is kind of like— this is really the core logic right here. Okay. Cool, cool, cool. You could actually, zoom out just one, one bit so I can kind of see a little.

00:42:35 - Nick Taylor

I'm zooming in something else. There we go.

00:42:38 - Anthony Campolo

That's this. Yeah, that's, that's, that's the perfect size for me. So yeah, you get the process video and these like all the different things you can pass in. It takes the URL, the model, and then whether you want to use, an LLM or not. The MD content is where it's creating the markdown. So what that is doing is that's using yt-dlp. And this is something that I may— if I'm eventually going to want to publish this as an npm package, I'm not sure how well yt-dlp is going to play with that because it's technically a Python tool. That's why you have to, you have to brew install it. But anyway, that gets you the link for the episode, the name of the channel, the URL to the channel, the title of the video, the day the video was published, and then the thumbnail.
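
As a sketch of that metadata step, the function below turns a yt-dlp style metadata object into markdown front matter. The input field names (`webpage_url`, `channel`, `channel_url`, `title`, `upload_date`, `thumbnail`) are yt-dlp's; the front matter key names and the function itself are hypothetical and may not match what AutoShow writes.

```javascript
// Hypothetical converter from yt-dlp metadata to markdown front matter.
function toFrontMatter(meta) {
  // yt-dlp reports upload_date as YYYYMMDD; reformat it as YYYY-MM-DD.
  const d = meta.upload_date;
  const publishDate = `${d.slice(0, 4)}-${d.slice(4, 6)}-${d.slice(6, 8)}`;
  const fields = {
    showLink: meta.webpage_url,
    channel: meta.channel,
    channelURL: meta.channel_url,
    title: meta.title,
    publishDate,
    coverImage: meta.thumbnail,
  };
  // JSON.stringify gives double-quoted strings, which are also valid YAML.
  const lines = Object.entries(fields).map(
    ([key, value]) => `${key}: ${JSON.stringify(value)}`
  );
  return ["---", ...lines, "---"].join("\n");
}
```

Quoting every value keeps titles that contain colons or quotes from breaking the front matter.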

00:43:24 - Nick Taylor

Okay. Yeah, no, this is cool, man. I'm just thinking, like, you're talking about error handling and stuff. I hung out with Mike Arnaldi on Monday, who's the creator of the Effect TypeScript library, AKA the missing standard library for TypeScript and JavaScript, as they call it.

00:43:45 - Anthony Campolo

Oh, I was talking about Effect, man. Yeah, I need to, I was thinking about asking Dev to come onto my stream and explain it to me. I'm not a TypeScript person, so I have no freaking clue.

00:43:56 - Nick Taylor

Yeah, no, I was gonna say, if you want, we could go through it. I'm still very brand new to Effect, but it was pretty interesting. Basically there's some patterns that are more from, say, Rust. So you can have errors happen, but basically there's exceptions that you're expecting. For example, say you hit the API and, I don't know, there's a network error, so you can add in retries and stuff. And this is all in the standard library of Effect already. And then, I don't know, say you retry like 5 times and after the 5th time it's just not happening. Then you can actually say, like, Effect.orDie, which basically means, okay, we really can't do anything here, you know? And I think it could be an interesting project to just try using it with. Mm-hmm. I don't know. I might look into it. I mean, I've cloned this. I might throw up an exploratory PR just to see. I don't know. Cuz this is definitely interesting to me as well, because like we were talking about before the stream, our team's slowly becoming all AI engineers and this is definitely relevant. But yeah, anyways, so, okay, so we process the video, then we just write the file, the markdown, and cool. Yeah.
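
The retry-then-give-up idea Nick describes can be illustrated in plain JavaScript. To be clear, this is not Effect's API and does not show how Effect models errors; it is only a hand-rolled analogy, with a hypothetical function name and options.

```javascript
// Plain-JS analogy: retry an async task, then fail loudly with the last error.
async function withRetries(task, { retries = 5, isRetryable = () => true } = {}) {
  let attempts = 0;
  let lastError;
  while (attempts <= retries) {
    attempts++;
    try {
      return await task();
    } catch (error) {
      lastError = error;
      // Unexpected errors are not worth retrying; stop immediately.
      if (!isRetryable(error)) break;
    }
  }
  // Nothing more can be done here, so surface the failure to the caller.
  throw new Error(`Failed after ${attempts} attempt(s): ${lastError.message}`, {
    cause: lastError,
  });
}
```

The `isRetryable` predicate is where expected failures, such as a network error, would be separated from everything else.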

00:45:33 - Anthony Campolo

Okay.

00:45:33 - Nick Taylor

And then Deepgram here. Yeah. I guess, I guess talk about the transcription.

00:45:36 - Anthony Campolo

So yeah, so this is where— so look where it says whisper.cpp/main. This is the part where it will need to reach out to your local Whisper. So if you didn't clone down Whisper and didn't build a model, you could use the Deepgram or AssemblyAI flags and run the transcription through them. And that's what we're going to do next is show how to do that. And then also feed the transcription to an LLM directly so we don't have to do the step we did where we copy pasted it into a chatbot. So I gave you two commands in the Discord. These are both going to use Claude, but one's going to use Deepgram and one's going to use AssemblyAI. And these are— I'm just getting— I'm just kind of curious to see how they're going to compare to each other.
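
The branching Anthony describes, local Whisper by default and a hosted service when its flag is passed, might look roughly like this. The option names, the returned shape, and the model file path are assumptions; `-m` (model) and `-f` (input file) are whisper.cpp's own command-line flags.

```javascript
// Hypothetical dispatcher: decide which transcription backend to use.
function pickTranscriber(options) {
  if (options.deepgram && options.assembly) {
    throw new Error("Pick one transcription service, not both.");
  }
  if (options.deepgram) return { kind: "deepgram" };
  if (options.assembly) return { kind: "assembly" };
  // No service flag: fall back to the whisper.cpp clone inside the repo.
  const model = options.model ?? "large";
  return {
    kind: "whisper",
    argv: [
      "./whisper.cpp/main",
      "-m", `whisper.cpp/models/ggml-${model}.bin`, // assumed model location
      "-f", options.wavPath,
    ],
  };
}
```

Keeping the local path as the fallback mirrors the demo, where the hosted services only come into play once their flags are passed.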

00:46:24 - Nick Taylor

Do you want me to run them in parallel or so?

00:46:27 - Anthony Campolo

Just run the first one. And then we're gonna have to rename a file after we do it, or we're going to get a name clash, because it's not really meant to run both. Right now you're meant to just pick one and do it and get your output. So now we're passing it .env. We were talking about how you don't need dotenv anymore. That's because you can do --env-file=.env. Super obnoxious syntax, I always forget it. Yeah, but then you're passing it the Deepgram flag and the Claude flag, and you already have your API keys in here for Deepgram and Claude. So for people who want to follow along at home, you have to get API keys. You may have to pay a couple bucks for some credits. Deepgram gives you $200 of credit right off the bat, but then I think it expires at some point, which is why you probably didn't have any anymore.
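
Node's built-in `--env-file=.env` option (available in recent Node 20 releases and later) loads the file into `process.env` before the script starts. A script could then verify that the keys for the selected services are actually present, as in this sketch. The flag names and the Deepgram and AssemblyAI variable names are hypothetical; `ANTHROPIC_API_KEY` and `OPENAI_API_KEY` are the names those SDKs read by default.

```javascript
// Hypothetical mapping from CLI flag to the environment variable it needs.
const REQUIRED_KEYS = {
  deepgram: "DEEPGRAM_API_KEY", // assumed variable name
  assembly: "ASSEMBLY_API_KEY", // assumed variable name
  claude: "ANTHROPIC_API_KEY",
  chatgpt: "OPENAI_API_KEY",
};

// List the variables that are required by the chosen flags but not set.
function missingApiKeys(flags, env = process.env) {
  return Object.entries(REQUIRED_KEYS)
    .filter(([flag, key]) => flags[flag] && !env[key])
    .map(([, key]) => key);
}
```

Failing fast with the list of missing variables is cheaper than discovering an expired or absent key after the audio has already been downloaded.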

00:47:14 - Nick Taylor

Yeah, because, when, when my coworker Becca was—

00:47:18 - Anthony Campolo

unless you ran $200 of transcription already.

00:47:21 - Nick Taylor

Yeah, I don't think I did. I think I did a stream with her when she was working there and then like, that was like more than a year ago. So it, it definitely expired, I'm sure. Okay, so it looks like it ran successfully.

00:47:34 - Anthony Campolo

Yeah. So it shows it in the, in the, the output there, but let's just go to the files it creates to go back to content again.

00:47:41 - Nick Taylor

Yeah.

00:47:41 - Anthony Campolo

Okay.

00:47:43 - Nick Taylor

Oh yeah, it's not gonna be in the project. Hold on.

00:47:47 - Anthony Campolo

Content.

00:47:50 - Nick Taylor

Okay, so we got the Claude one here. Okay.

00:47:53 - Anthony Campolo

Yep. So this now, so now we see how this did everything all at once. It started with, it ran the transcript and you can see the transcript is the other file that was created. And that might actually, hopefully it overwrote the one you, or, okay. Actually look at, look, look at your other file really quick just so I can see what happened here. Yeah, this one. So, okay, so this. Is—

00:48:19 - Nick Taylor

so this didn't add them.

00:48:22 - Anthony Campolo

So this overwrote the one we had previously.

00:48:25 - Nick Taylor

Okay, gotcha.

00:48:27 - Anthony Campolo

Yeah, which is okay.

00:48:28 - Nick Taylor

Cool.

00:48:28 - Anthony Campolo

But, so that's why I was saying we should... So scroll down a little bit. Yeah, so I can see the transcript on this one, scroll down the file. Yeah, okay. So this is the transcript that was created with Deepgram, not with Whisper.cpp, I'm pretty sure. Okay. And then it took this whole thing and fed it to Claude. And then that's where the show notes in the other file came from.

00:48:55 - Nick Taylor

Okay. Fuzzy in the chat is saying, can I see the execSync method? He's suggesting using Google's zx. Here it is, Fuzzy.

00:49:05 - Anthony Campolo

I have heard of ZX. I've also heard of Execa. Do you know about Execa?

00:49:10 - Nick Taylor

Yeah, it's from Sindre. Yeah. Sindre, another package from Sindre.

00:49:17 - Anthony Campolo

Yeah. This has been my problem is whether I use Execa or the thing you're telling me about right now. So I need to make a decision. Yeah.

00:49:25 - Nick Taylor

Cool.

00:49:25 - Anthony Campolo

Cool.

00:49:26 - Nick Taylor

Yeah.

00:49:27 - Anthony Campolo

Yeah. That's okay. I appreciate the input, Fuzzy. I have heard of this. I have been told something similar by the internet's collective unconsciousness as I've been building out this tool. So, um. Yeah. If you know anything about Execa, I'd be curious if you're familiar with it. Otherwise, I might just go with— Cool.

00:49:46 - Nick Taylor

All right. So, let's try the Assembly AI one now.

00:49:50 - Anthony Campolo

And real quick, save those two files. Just drag them to like the root of your project. That's one kind of dirty way to get these out of the blast radius. And this is more error handling I need to do, so that when it's generating stuff, it's not gonna just overwrite files you already have. This is why I think a lot of these are still kind of quick and dirty right now. Cool. All right. All right.
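
One simple way to avoid clobbering earlier output is to pick the next free filename before writing. This sketch is only an illustration of that idea, not something the project does today. The function name and the numeric suffix scheme are hypothetical, and the existence check is passed in (for example `fs.existsSync`) so the sketch needs no imports.

```javascript
// Hypothetical helper: return `path`, or the first "-1", "-2", ... variant
// of it that does not exist yet.
function nextFreePath(path, fileExists) {
  if (!fileExists(path)) return path;
  const dot = path.lastIndexOf(".");
  const slash = path.lastIndexOf("/");
  // Only treat the dot as an extension separator if it is in the file name.
  const hasExtension = dot > slash + 1;
  const stem = hasExtension ? path.slice(0, dot) : path;
  const extension = hasExtension ? path.slice(dot) : "";
  for (let n = 1; ; n++) {
    const candidate = `${stem}-${n}${extension}`;
    if (!fileExists(candidate)) return candidate;
  }
}
```

An alternative with the same effect would be to put the service name in the filename, so a Deepgram run and an AssemblyAI run of the same video never collide.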

00:50:12 - Nick Taylor

So we'll run the, what is it? Okay. This has got AssemblyAI now.

00:50:16 - Anthony Campolo

Yep. So that's the only thing that's different. It's still gonna feed it to the Claude LLM, and it's using AssemblyAI. Why don't we, real quick, just so people get some context, pull up Deepgram's and AssemblyAI's homepages. Okay, I'll let that run in the background. So, you already had a Deepgram account, so have you tried it? Did you ever actually use it?

00:50:40 - Nick Taylor

I did it in that stream like I was talking about. I think the stream we did, I'd have to check, we were working on... oh yeah, I think we were working on fixing the Deepgram browser extension, which could actually do transcription, I think. I think that's what we were doing.

00:51:00 - Anthony Campolo

Like live transcription.

00:51:02 - Nick Taylor

Yeah, I think that's what it was. Or essentially, or no, it was talking about before the stream. Yeah. Okay, so there's that. And what was the other site you wanted me to load up?

00:51:12 - Anthony Campolo

AssemblyAI. Okay, this is the new hotness, the one that has all the money and all the...

00:51:19 - Nick Taylor

Oh, site not found.

00:51:21 - Anthony Campolo

Just Google AssemblyAI. Whatever you landed on, it's not their actual website.

00:51:26 - Nick Taylor

Oh, assemblyai.com. That makes sense. The .com is where it's at.

00:51:31 - Anthony Campolo

Yeah. Yeah.

00:51:32 - Nick Taylor

Okay.

00:51:33 - Anthony Campolo

So when you're looking for transcription services, you know how with vector databases there's Pinecone and then there's everyone else? Or it's the same thing in the crypto world: there was Alchemy and then everyone else. So this is the thing everyone's using now, allegedly. I kind of like Deepgram a little bit more personally, but this seems to have the most funding and momentum behind it. Take that for what it's worth, obviously. Try them both out, I recommend. There's another one I tried out, Speechmatics. Speechmatics wasn't bad, but it didn't have anywhere near the level of features and documentation that AssemblyAI and Deepgram had. It was pretty night and day. So I decided to stick with these two, build out those integrations, and then I'm probably going to just keep it that way and expand out more into the LLM world. I want to offer more open source models, not just Claude and ChatGPT. And then I want to add Cohere, I want to add Gemini, I want to add a whole bunch more models. So that's probably the direction I'll go. I think I'm just going to stick with these two transcription services for now because, me personally, I'm not going to use either of these guys. I'm going to keep using Whisper.cpp on my own machine, but that's just not really feasible for a lot of people.

00:52:54 - Nick Taylor

Yeah. I'm wondering, like, if you were to use, I'm just, I'm thinking of like you building the actual product now, like with the website and stuff, like, I guess, I guess it wouldn't make sense to put that in Assembly, the Whisper.cpp, cuz it's not like you would fire it off and then I guess there'd be some background job and then it would let them know when it's done, or—

00:53:18 - Anthony Campolo

There is a way in which I can spin up a server that will just run Whisper.cpp, and I can use that as my own transcription API endpoint. That is a thing that I'm probably gonna pursue and try out. I'm not sure if that's what you're suggesting or not, but that's one way you're gonna be able to have that available without needing it locally, and I can still manage the cost of that in my own way. Like, if I just have a DigitalOcean droplet running, you can just run transcriptions forever. I might end up trying that out and see how that works.

00:53:52 - Nick Taylor

Okay. Yeah. No, but this is pretty cool. And again, this is super useful. Because part of the thing is, I know DevRel is kind of up and down right now, but one thing... you know bdougie as well, my CEO. I always find it funny to call him...

00:54:14 - Anthony Campolo

I was looking at the OpenSauced page actually and saw you have a lot of videos you could use this on.

00:54:20 - Nick Taylor

Yeah, no, I might take it for a spin there. But the thing I was gonna say is, bdougie's all about, you know, in DevRel you've gotta create content, and one thing to do there is what you're doing here, repurposing the content. You did a podcast or you did a livestream like we're doing now, so it makes sense to convert this into a blog post. I've done it, kind of. I was using, what was it called? Sorry, Descript, for a while. I use it occasionally now.

00:54:57 - Anthony Campolo

Descript. Yeah. Mm-hmm.

00:54:58 - Nick Taylor

Yeah. Because what I used to do, I used it for creating podcast episodes. But honestly, my podcast, I haven't basically pulled in any new episodes in about a year because it's kind of intensive for me to edit them. And I'm almost wondering if I should just put them up raw and leave it like that, you know? It's not like I'm running Syntax.fm or something.

00:55:22 - Anthony Campolo

This is why I really like live streaming, stuff like this. We just go, and at the end there's a huge chunk of content. It is what it is. Like, yeah, I got crazy with editing FSJam. I used to spend like 10 hours editing FSJam episodes. It was absolutely absurd.

00:55:37 - Nick Taylor

Yeah, no, that's the thing. And part of the reason why I stream, it's not because I'm lazy. It's encouraged at work. You don't have to, obviously, and I was doing this before I was working there. But I like it because I only have so much time in the week to do something like this. I would like to do polished YouTube content at some point, but in my own schedule right now, it's just not in the cards, you know what I mean? Because even a 5-minute video on YouTube could take forever. I mean, obviously the bigger streamers like Primeagen or Theo, they've got their own editors and stuff, so it's not them doing it, you know? But if I were to do that, I just don't have time right now. So that's why I appreciate the live streams. And now I stream to multiple platforms. I tried streaming to multiple platforms about a year and a half ago, and then I ran into something. I was using Restream, and I think I was streaming with Mike from Ionic. Mike H, what's his last name? It's escaping me. Anyways, I started the stream with him and then something went wrong. And when you have a stream key for a live event... yeah, Hartington, thank you. Yeah, Hartington, that's it. But when you have a live stream like this, if something went wrong with the stream, I can't restart it, because it's live already. So basically I got kind of turned off from the multi-platform stuff from that, because it happened a couple times. But now it seems to be stable, and I don't know, I think I just maybe have a better setup. So I used to edit the YouTube videos before I would upload them to YouTube, and now I just don't, because I'm streaming to YouTube as well right now, you know?
And it's also a bit of better quality of life for me because I'm not editing anything now. It's just up there. But again, getting back to the editing, I guess one question I have. Something like Descript has a neat feature, though it doesn't work well all the time for speech, which is taking out the ums and the ahs. They have an auto-remove feature. And at first I was like, oh, I'm just gonna do that for a podcast episode. And it's not bad, but in some cases it'll make, you know, blips and stuff. So when somebody's speaking, it just sounds unnatural.

00:58:08 - Anthony Campolo

Mm-hmm.

00:58:10 - Nick Taylor

But what I was getting at in my long-winded story there was, for the transcription part, does something like Deepgram or AssemblyAI get rid of the ums and the ahs in the transcript? Because obviously, you know, it wouldn't affect the reading, you know?

00:58:26 - Anthony Campolo

So they're highly configurable. One of the configurations is to remove filler words. I think both of them offer that. This is one of the reasons why I ended up throwing out Speechify, or Speechmatics. I always forget their name. There's all this stuff that those two offer: they can cut out filler words, you can feed them a bank of words ahead of time so it will know how to spell things that it might trip over, you can configure punctuation. There's a whole bunch of stuff you can do. Right now I haven't really gone down that rabbit hole yet, because for my purpose you really just need a huge chunk of text to feed to an LLM. And even if there's no punctuation whatsoever, like, oh, I don't give a crap. It's just going to read the thing and extract the raw data of what's happening, and it can do that with very little of the human-type markings that you would need to actually make something readable. So yeah, totally. But if I want the transcription to be something that would be nice and readable afterwards, this is where these services give you a lot of power in that respect.
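
The kinds of options described above can be pictured as a small configuration object that gets serialized into a request. The sketch below is provider-neutral on purpose: `TranscriptionOptions`, `toQueryString`, and the key names (`remove_filler_words`, `punctuate`, `vocabulary`) are hypothetical placeholders, not the real parameter names of Deepgram or AssemblyAI, so check each service's documentation for the actual ones.

```typescript
// Hypothetical, provider-neutral options; real services use their own parameter names.
interface TranscriptionOptions {
  removeFillerWords: boolean; // drop "um", "uh", and similar
  punctuate: boolean; // add punctuation to the transcript
  customVocabulary: string[]; // words the service might otherwise misspell
}

// Serialize the options into a URL query string (placeholder key names).
function toQueryString(options: TranscriptionOptions): string {
  const pairs: [string, string][] = [
    ["remove_filler_words", String(options.removeFillerWords)],
    ["punctuate", String(options.punctuate)],
    ...options.customVocabulary.map(
      (word): [string, string] => ["vocabulary", word],
    ),
  ];
  return pairs
    .map(([key, value]) => `${encodeURIComponent(key)}=${encodeURIComponent(value)}`)
    .join("&");
}

console.log(
  toQueryString({
    removeFillerWords: true,
    punctuate: false,
    customVocabulary: ["AutoShow", "yt-dlp"],
  }),
);
```

For a transcript that only gets fed to an LLM, most of these can stay at their defaults. They matter more when the transcript itself is meant to be read.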

00:59:41 - Nick Taylor

Yeah. You don't need to stare at the screen share right now, because I'm looking at us on the screen. I put us back to just the talking view. But again, just to reiterate, you open sourced it, which I think is super cool. This is actually useful. I know sometimes people just create projects just to, you know, do something. This is something that I'm terrible at. I can't remember if I was mentioning this before the stream, but a lot of times I'm terrible at coming up with a good idea, so I tend to latch onto, like, I find this project interesting, I'm gonna go help that project or contribute to it. And I've cloned this, obviously, because we've been looking at it. I'm definitely gonna play around with it.

01:00:27 - Anthony Campolo

I wanted to show this to my content creator friends to see if they would be able to use it. So you were someone specifically that I wanted to pitch this project to, because I feel like this can be useful for you both for your own personal stuff and for your work stuff as well. So if you play around with it, just let me know, and I'll be super curious to see how it is.

01:00:48 - Nick Taylor

Yeah. Yeah. No, I'm trying to think. So when you do packages... like, if we talk through your ideas again for productizing it, you were saying you can spin up Whisper.cpp, no problem. So you can just basically send it a URL, I guess, or whatever payload you need to go over there.

01:01:12 - Anthony Campolo

Right, yeah. And what I'm thinking is the simplest would be you would just have an input form where someone could give a YouTube video, or a playlist, and it would need to analyze it to know basically how long it is, because the length is going to decide the cost. The length translates fairly well to the number of tokens, unless someone was really talking super duper hyper fast for some reason. So then you would basically have different transcription services you could pick from and different transcription models you could pick from that would each have different costs. And then different LLMs you could pick from that would each have different costs. And I would need to have some sort of calculation on the backend that would basically let you pick these, and then I would spit out a cost for that. So that would be single use. Then the next level would be, I have to figure out how to offer a subscription that lets people pay a monthly rate that gives them a certain allocation. And that's where it gets more complicated, because then it's like, well, people will be paying for subscriptions and maybe not using it all, so you can make up some margins there. But if someone does sign up and uses every single amount they pay for every single time, then that has to be totally worked out so that there's still a profit, you know? So that's why I said there's a lot of things to work out there in terms of the actual monetization aspect. Single-use videos is probably the first thing I'll implement, because I could calculate something where I know I'm gonna make a profit.
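
The single-use pricing idea can be sketched as a small backend calculation. Everything here is an assumption for illustration: the service and model names, the rates, the tokens-per-minute figure, and the margin are made-up placeholders rather than real pricing or AutoShow's implementation.

```typescript
// Placeholder rates in USD; none of these are real prices.
const transcriptionRatePerHour: Record<string, number> = {
  local: 0,
  serviceA: 0.12,
  serviceB: 0.25,
};
const llmRatePerMillionTokens: Record<string, number> = {
  modelA: 3,
  modelB: 15,
};

// Rough assumption: speech yields a fairly steady number of tokens per minute.
const TOKENS_PER_MINUTE = 200;

// Estimate the price to charge for one video, including a profit margin.
function estimatePriceUsd(
  durationSeconds: number,
  service: string,
  model: string,
  margin = 0.2,
): number {
  const hourlyRate = transcriptionRatePerHour[service];
  const tokenRate = llmRatePerMillionTokens[model];
  if (hourlyRate === undefined) throw new Error(`Unknown service: ${service}`);
  if (tokenRate === undefined) throw new Error(`Unknown model: ${model}`);

  const transcriptionCost = (durationSeconds / 3600) * hourlyRate;
  const estimatedTokens = (durationSeconds / 60) * TOKENS_PER_MINUTE;
  const llmCost = (estimatedTokens / 1_000_000) * tokenRate;
  return (transcriptionCost + llmCost) * (1 + margin);
}

// A one-hour video with the placeholder rates above.
console.log(estimatePriceUsd(3600, "serviceA", "modelA").toFixed(4));
```

Because the margin is applied per request, each single-use job is priced above its cost by construction. A subscription would also need a model of how much of the allocation people actually use.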

01:02:48 - Nick Taylor

Yeah, I almost wonder if pay-as-you-go makes more sense. I was looking at AssemblyAI, and I think they said it's like 12 cents an hour, for example.

01:02:57 - Anthony Campolo

That's just for transcription, obviously, but yeah, that seems simpler to calculate. Yeah.

01:03:04 - Nick Taylor

Yeah. Because I feel like you potentially complicate your life if you said, okay, it's, I don't know, $15 a month. Oh, you didn't use it this month. So, I mean, I guess you could do that.

01:03:16 - Anthony Campolo